The things I read this week (23)

Tech in general

I learned that most of the layoffs in the US are not so much about AI taking jobs. Sure, there are bound to be a bunch of people that are no longer employed because their jobs was easily replaced by a system, but there is more then meets the eye. In “The hidden time bomb in the tax code that's fueling mass tech layoffs” explores the tax rule that was changed under Trump-I, section 174, which basically no longer allows companies to write-off R&D effort in the current fiscal year.

Security in general

Some really neat attacks or attack vectors:

- Weaponizing Dependabot: Pwn Request at its finest :: Learn how Dependabot can be co-opted to exploit some sensitive workflows, through the Confused Deputy Problem and branch name injections.

AI

New models of interest

- Black Forrest Lab – Flux.1 Kontext for image manipulation

General News

Lauren Weinstein reported that OpenAI was ordered to store logs of all conversations with ChatGPT, even the private and “do not use for training” data. The original article was by arstechnica.

Antirez wrote a nice opinion post on why thy think humans are still beter then AI at coding.

In the same light, Cloudflare released an oauth library “mostly” written by AI. Max Mitchell went through the github history and found that without human involvement we would not have this library. Granted, 95% of the code seems generated, but it would not work without humans.

A note by Cloudflare: To emphasize, this is not “vibe coded”. Every line was thoroughly reviewed and cross-referenced with relevant RFCs, by security experts with previous experience with those RFCs. I was trying to validate my skepticism. I ended up proving myself wrong.

As AI is able to understand complex papers much easier then humans, Reuven Cohen posted that he used Perplexity AI to read a paper on secretly tracking human movement through walls using standard WiFi routers (Geng et al.). It took the AI less then 1 hour to implement the paper in an application.

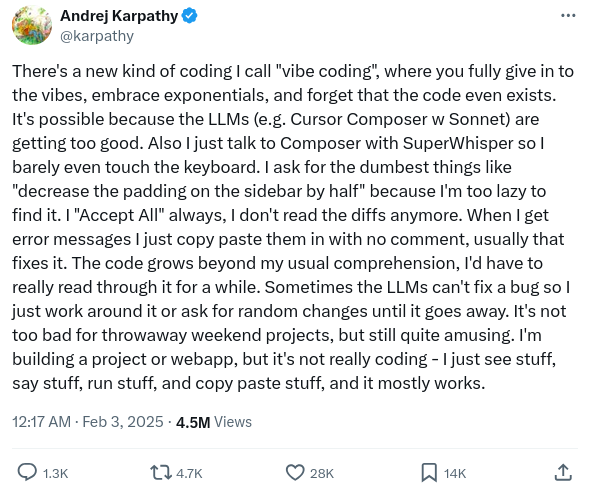

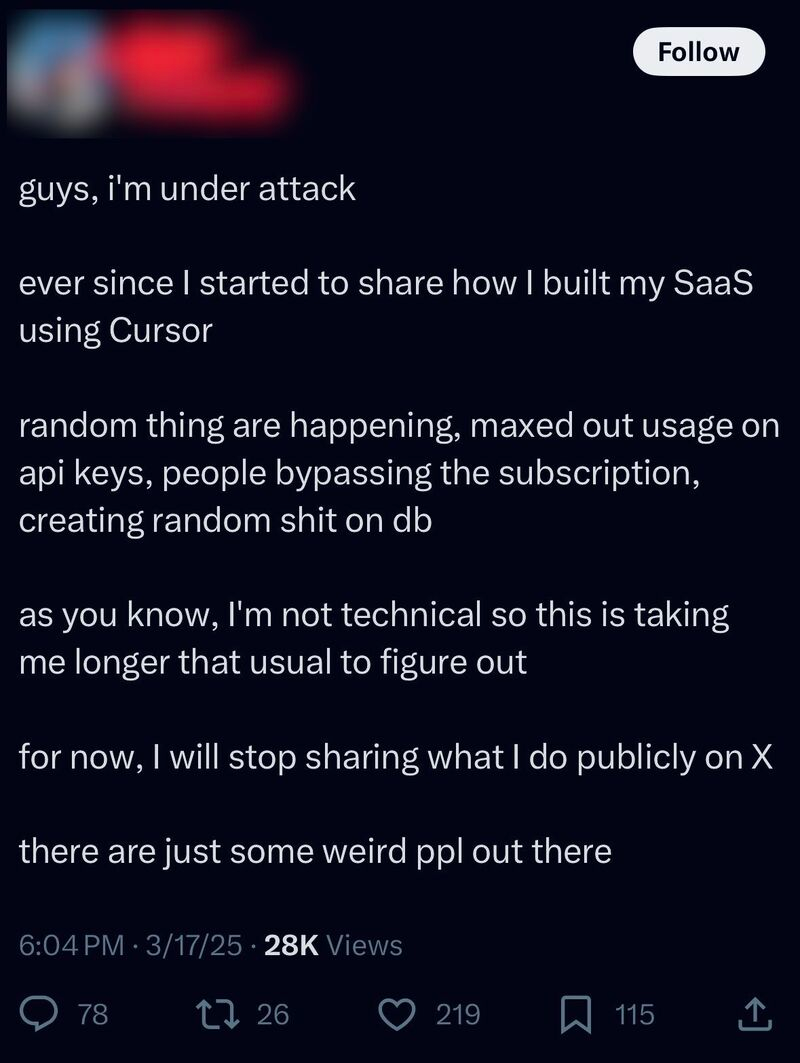

Sonia Mishra wrote a very nice piece on The Rise of Vibe Coding: Innovation at the Cost of Security, I highly recommend anyone thinking about using {{< backlink “vibe-coding” “vibe-coding” >}} to check it out.

Security issues

- AI-hallucinated code dependencies become new supply chain risk by Bill Toulas

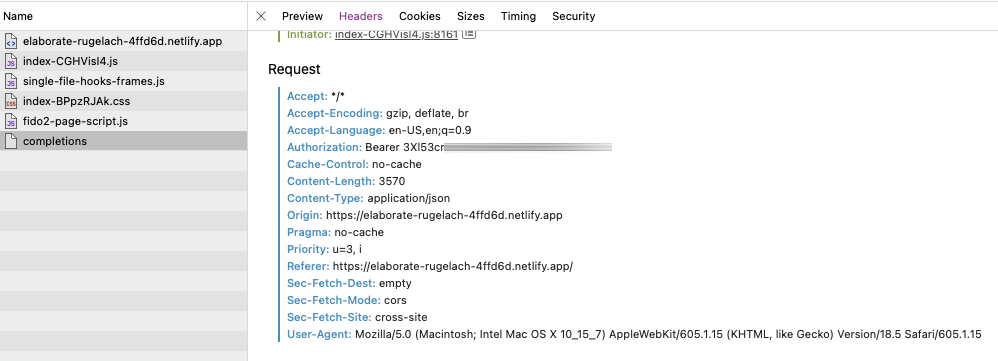

- Claude seems to have learned how to bypass restrictions set by the Cursor IDE. It was not allowed to use

mvandrm, so it wrote a shell script to do it for it and executed it. - VectorSmuggle :: A comprehensive proof-of-concept demonstrating vector-based data exfiltration techniques in AI/ML environments. This project illustrates potential risks in RAG systems and provides tools and concepts for defensive analysis.

Model Context Protocol

The world is going nuts about Model Context Protocol.

- Have you ever wanted to write a MCP server in bash? Now you can – mcp-server-bash-sdk.

Attacks

A list of interesting attack vectors or stories:

- Exploiting Model Context Protocol (MCP) – Demonstrating Risks and Mitigating GenAI Threats :: Cato CTRL built 2 PoCs showing how the Model Context Protocol (MCP), the “USB-C” for GenAI, can be exploited for attacks. GenAI threats are evolving fast.

- GitHub MCP Exploited: Accessing private repositories via MCP :: We showcase a critical vulnerability with the official GitHub MCP server, allowing attackers to access private repository data. The vulnerability is among the first discovered by Invariant's security analyzer for detecting toxic agent flows.

- Remote Prompt Injection in GitLab Duo Leads to Source Code Theft

- MCP Security Exposed: What You Need to Know Now :: explores the security issues with MCP, very American in its writing, be warned.

- Poison everywhere: No output from your MCP server is safe :: In this blog post, we’ll briefly explore MCP and dive into a Tool Poisoning Attack (TPA), originally described by Invariant Labs. We’ll show that existing TPA research focuses on description fields, a scope our findings reveal is dangerously narrow. The true attack surface extends across the entire tool schema, coined Full-Schema Poisoning (FSP). Following that, we introduce a new attack targeting MCP servers — one that manipulates the tool’s output to significantly complicate detection through static analysis. We refer to this as the Advanced Tool Poisoning Attack (ATPA).

- Top 10 MCP Security Risks You Need to Know – Prompt Security :: Discover the top 10 security risks in Model Context Protocols (MCPs). Learn how attackers exploit prompt injection, tool misuse, and more.

Defense

A company announced itself, Spawn Systems, that is promoting its product MCP Defender, a firewall type system to shield you of MCP abuse. There is very little information. From the Github history the first commit was May 28th, and the entire thing seems to be {{< backlink “vibe-coding” “vibe coded” >}}, I would not yet trust this project.